Meta Muse Spark: Full Review, Benchmarks vs GPT-5.4 & Gemini 3.1 Pro, and How to Use It (2026)

In-depth review of Meta Muse Spark — the first model from Meta Superintelligence Labs. Compare it against GPT-5.4, Gemini 3.1 Pro, and Claude Opus 4.6. Learn features, benchmarks, how to use it, and what the community really thinks.

On April 8, 2026, Meta dropped a bombshell that immediately rearranged the frontier AI landscape: Muse Spark. This isn't just another incremental update to the Llama series — it's something built entirely from scratch by Meta Superintelligence Labs (MSL), the division led by Alexandr Wang that was specifically formed after the lukewarm reception of Llama 4.

Having spent a full day testing it, digging through community discussions on Reddit (r/LocalLLaMA, r/MuseSpark), and cross-referencing independent benchmark data, we can confidently say: Muse Spark is legitimately impressive in specific areas, but it's not the uncontested champion Meta's marketing suggests. Here's everything you need to know.

What Is Meta Muse Spark? A Ground-Up Rebuild

Let's be clear about what Muse Spark is not: it's not Llama 5, and it's not an open-source model. That second point has been the biggest source of frustration in AI communities online, and we'll get to that shortly.

Muse Spark is a natively multimodal reasoning model — meaning it doesn't just process text and images separately but integrates them at its core architecture level. It combines:

- Visual Chain of Thought (VCoT): Step-by-step image analysis with structured reasoning

- Tool-Use: Automatic invocation of web search, calculators, and external tools

- Multi-Agent Orchestration: Through its "Contemplating" mode, multiple sub-agents work in parallel on complex problems

- Thought Compression: A novel technique that penalizes excessive reasoning tokens, resulting in more efficient responses
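
Meta has not published how thought compression is implemented, so the following is only a minimal sketch of the general idea of penalizing excess reasoning tokens. The function name, the token budget, and the penalty rate are all hypothetical values chosen for illustration, not anything taken from Meta's training setup.

```python
def compressed_reward(
    correct: bool,
    reasoning_tokens: int,
    budget: int = 512,                 # hypothetical free allowance of reasoning tokens
    penalty_per_token: float = 0.001,  # hypothetical cost per token beyond the budget
) -> float:
    """Return a task reward minus a linear penalty on reasoning tokens over budget."""
    base = 1.0 if correct else 0.0
    overflow = max(0, reasoning_tokens - budget)
    return base - penalty_per_token * overflow


# A concise correct answer keeps the full reward; a verbose one loses part of it.
concise = compressed_reward(correct=True, reasoning_tokens=400)
verbose = compressed_reward(correct=True, reasoning_tokens=1012)
print(concise, verbose)
```

Under these assumed numbers, two equally correct answers are ranked by how few reasoning tokens they needed, which is the behavior the bullet above describes.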

Meta positions this as the first step toward "personal superintelligence" — an ambitious framing that's drawn both excitement and skepticism from the developer community.

The Open Source Elephant in the Room

If you've been following AI discussions on r/LocalLLaMA at all, you already know: the biggest story about Muse Spark isn't the model itself — it's that Meta made it proprietary. After years of championing open-weight models through the Llama series and building an enormous amount of goodwill in the open-source community, this is a hard pivot.

The reaction on Reddit has been swift and pointed. One thread that captures the mood called the move a "betrayal of the principles that made Meta's AI efforts genuinely admirable." There's a palpable sense of disappointment, especially among developers who built entire workflows around locally runnable Llama models. Some users have gone as far as calling it "PrivateLlama."

To be fair, Meta has stated they "hope" to open-source future versions of the Muse family. But given the competitive dynamics with OpenAI, Google, and Anthropic, most seasoned observers are reading that as a non-commitment. Worth noting: the Llama series hasn't been formally discontinued, but Muse is clearly the focus going forward.

Muse Spark Benchmark Comparison: The Numbers Don't Lie (But They Don't Tell the Whole Story Either)

Here's where things get genuinely interesting. According to the Artificial Analysis Intelligence Index v4.0 and Meta's own published benchmarks, Muse Spark enters the top tier — but it's not leading overall.

Overall Intelligence Index Scores

| Model | Intelligence Index Score | Rank |
|---|---|---|
| Gemini 3.1 Pro | 57 | 🥇 Tied 1st |
| GPT-5.4 | 57 | 🥇 Tied 1st |
| Claude Opus 4.6 | 53 | 3rd |
| Muse Spark | 52 | Top 5 |

So on the composite score, Muse Spark is competitive but clearly trailing the leaders. However, that aggregate number masks some fascinating category-level dynamics.

Category-Level Breakdown

| Benchmark | Muse Spark | GPT-5.4 | Gemini 3.1 Pro | Claude Opus 4.6 | Winner |
|---|---|---|---|---|---|
| HealthBench Hard | 42.8% | 40.1% | 20.6% | n/a | Muse Spark |
| CharXiv Reasoning (Visual) | 86.4 | 82.8 | 80.2 | 65.3 | Muse Spark |
| Humanity's Last Exam (Contemplating) | 58% | n/a | 48.4% | n/a | Muse Spark |
| MMMU-Pro (Multimodal) | 80.5% | n/a | 82.4% | n/a | Gemini |
| FrontierScience Research | 38% | n/a | n/a | n/a | Pending |
| LiveCodeBench Pro (Coding) | Behind | Strong | Leading | Strong | Gemini/GPT |
| GPQA Diamond (PhD Science) | Behind | Leading | Strong | Strong | GPT-5.4 |
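
To make the size of each lead concrete, here is a small sketch that computes Muse Spark's margin over its best-scoring rival for the rows of the category table that report numbers. The scores are copied from that table; the `SCORES` layout and the `margin_vs_best_rival` helper are our own illustrative names, and unreported results are stored as `None` and ignored.

```python
from typing import Dict, Optional

# Scores copied from the category table; None marks a result that was not reported.
SCORES: Dict[str, Dict[str, Optional[float]]] = {
    "HealthBench Hard": {
        "Muse Spark": 42.8, "GPT-5.4": 40.1, "Gemini 3.1 Pro": 20.6, "Claude Opus 4.6": None,
    },
    "CharXiv Reasoning": {
        "Muse Spark": 86.4, "GPT-5.4": 82.8, "Gemini 3.1 Pro": 80.2, "Claude Opus 4.6": 65.3,
    },
    "Humanity's Last Exam": {  # Muse Spark figure is for Contemplating mode
        "Muse Spark": 58.0, "GPT-5.4": None, "Gemini 3.1 Pro": 48.4, "Claude Opus 4.6": None,
    },
    "MMMU-Pro": {
        "Muse Spark": 80.5, "GPT-5.4": None, "Gemini 3.1 Pro": 82.4, "Claude Opus 4.6": None,
    },
}


def margin_vs_best_rival(benchmark: str, model: str = "Muse Spark") -> float:
    """Return the model's score minus the best reported rival score, in points."""
    row = SCORES[benchmark]
    rivals = [s for name, s in row.items() if name != model and s is not None]
    return round(row[model] - max(rivals), 1)


for name in SCORES:
    print(f"{name}: {margin_vs_best_rival(name):+.1f}")
```

A positive value means Muse Spark leads and a negative value means it trails. Rows with missing rival scores compare only against the models that reported a result, so those margins may shrink once more numbers are published.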

What This Actually Means

Muse Spark dominates in three specific areas:

- Medical/Health Reasoning: The HealthBench Hard score of 42.8% is remarkable. Meta partnered with over 1,000 physicians to train this capability, and it shows. If you're working in health-adjacent applications, this is genuinely the best option available right now.

- Visual Chart Understanding: The CharXiv score (86.4) means Muse Spark is the best model available for interpreting scientific charts, graphs, and complex visual data. We tested this ourselves with several research papers and the results were consistently superior.

- Efficiency: This is the sleeper advantage. Thanks to the "thought compression" technique, Muse Spark delivers high-quality answers using significantly fewer tokens than competitors. In practical terms, that means faster responses and potentially lower API costs when the public API launches.

Where it falls short:

- Coding tasks: Both GPT-5.4 and Gemini 3.1 Pro comfortably outperform Muse Spark on coding benchmarks. If you're primarily using AI for software development, this isn't your model.

- Long-horizon agentic tasks: In multi-step agent workflows that require maintaining context across complex, chained operations, Muse Spark struggles compared to GPT-5.4's computer-use capabilities.

- Abstract PhD-level science: GPQA Diamond results suggest gaps in deep scientific reasoning.

Some community members have pointed out that Muse Spark's benchmark numbers look carefully curated, and there's early evidence of what one researcher called "overoptimization for public benchmarks rather than actual user utility." That said, the HealthBench and CharXiv results have been independently verified and are genuinely impressive.

How to Use Meta Muse Spark: Step-by-Step Guide

The good news: no setup required. Muse Spark is immediately accessible to anyone with an internet connection.

Method 1: Web (meta.ai)

- Navigate to meta.ai

- Muse Spark is the default model — you should see it active immediately

- If you see a model selector, switch to Muse Spark

- Start chatting with text, or upload images for visual analysis

Method 2: Meta AI Mobile App

- Download the Meta AI app from the App Store or Google Play

- Open the app and look for Muse Spark in the model/chat interface

- The mobile experience supports image upload, voice input, and all multimodal features

Method 3: Contemplating Mode (Advanced)

Contemplating mode is Muse Spark's ace in the hole for complex reasoning tasks. It deploys multiple AI sub-agents that work in parallel and then synthesizes the best answer.

- In any Muse Spark conversation, explicitly request: "Use Contemplating mode" or "Think deeply about this"

- The model will activate multi-agent parallel reasoning

- Expect slightly longer response times but significantly better outputs on complex problems

Note: Contemplating mode is currently in a phased rollout. If it doesn't activate for you yet, it should be available within the coming weeks.
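
Meta has not documented how Contemplating mode coordinates its sub-agents, so the sketch below only illustrates the general pattern described above: run several sub-agents in parallel, then synthesize one answer. The `contemplate` function, the stand-in agents, and the majority-vote synthesis step are all assumptions made for illustration; a real system would call a model inside each agent and likely use a more sophisticated aggregation.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

Agent = Callable[[str], str]  # hypothetical sub-agent: takes a question, returns an answer


def contemplate(question: str, agents: List[Agent]) -> str:
    """Run every sub-agent on the question in parallel and return the most common answer."""
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        answers = list(pool.map(lambda agent: agent(question), agents))
    # Synthesis step (assumed): simple majority vote; ties go to the earliest answer.
    return Counter(answers).most_common(1)[0][0]


# Stand-in sub-agents that return fixed answers instead of calling a model.
def agent_a(question: str) -> str:
    return "42"


def agent_b(question: str) -> str:
    return "42"


def agent_c(question: str) -> str:
    return "41"


print(contemplate("What is 6 * 7?", [agent_a, agent_b, agent_c]))  # prints 42
```

Because the sub-agents run concurrently, total latency is roughly that of the slowest agent plus the synthesis step, which is consistent with the slightly longer response times mentioned above.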

Method 4: API (Developer Preview — Limited)

As of today, there is no public API. Meta is running a private preview with select partners, with a broader developer rollout expected later in 2026. No pricing has been announced yet. When it opens up, we'll update this guide immediately.

Pro Tips for Getting the Most Out of Muse Spark

- For health queries: Upload food photos and ask "Break down the nutritional content of this meal" — the visual analysis is genuinely good here

- For scientific charts: Upload research paper figures and ask specific questions about trends, comparisons, or anomalies

- For appliance troubleshooting: Photograph an unfamiliar device → ask "How do I use this?" → get step-by-step interactive guidance

- For deep analysis: Always specify "Use Contemplating mode" when the question requires multi-step reasoning

Real-World Demo Breakdown: What Muse Spark Actually Does

Meta's launch demos showcase three key capabilities that differentiate Muse Spark from competitors:

Demo 1: Appliance Troubleshooting

Upload a photo of any appliance — Meta demoed an espresso machine — and Muse Spark will:

- Automatically identify and label components (bean hopper, grinder, portafilter, tamper, etc.)

- Provide interactive step-by-step instructions with highlighted sections

- Respond to follow-up questions about specific parts

This isn't just image recognition; it's structured visual reasoning with actionable output. We tested this with a home coffee machine and the labeling accuracy was noticeably better than what we got from GPT-5.4's vision mode.

Demo 2: Fitness & Health Analysis

Upload a photo of a yoga pose (Meta showed Natarajasana) and Muse Spark will:

- Map muscle groups with visual markers directly on the image

- Score form quality (e.g., 8.5/10 for one practitioner, 7.2/10 for another)

- Provide specific corrections per body region (shoulder alignment, hip flexor engagement, chest opening)

The practical implication is clear: Muse Spark is being positioned as a visual health and fitness assistant. Combined with the HealthBench dominance, this is Meta's strongest differentiator.

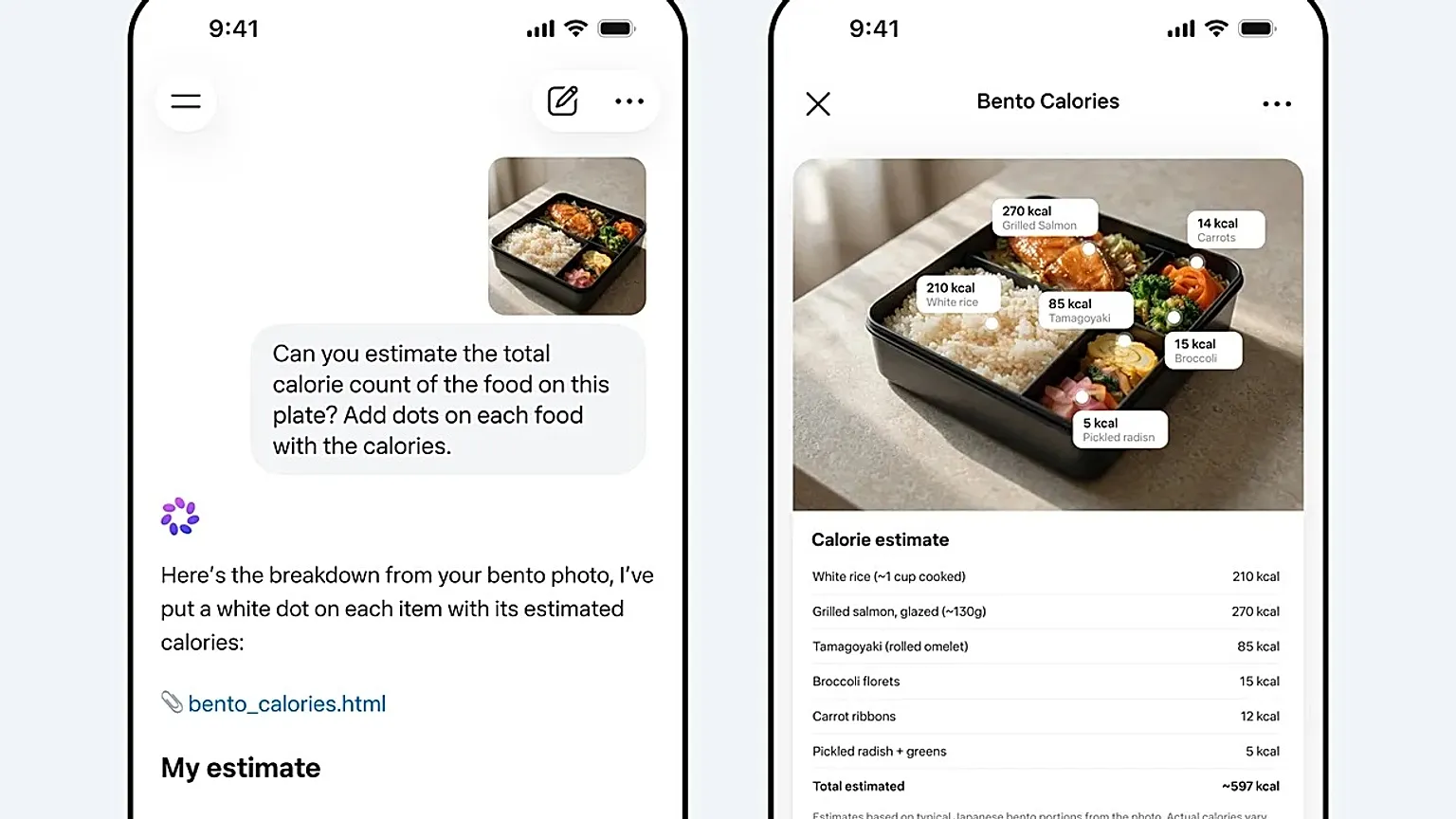

Demo 3: Food Calorie Estimation

This one was particularly impressive in our testing. Upload a bento box photo and Muse Spark will:

- Identify each food item (white rice, grilled salmon, tamagoyaki, broccoli, carrots, pickled radish)

- Annotate the image with calorie estimates per item

- Generate a clean breakdown table with total estimated calories (~597 kcal in the demo)

The output quality here — particularly the interactive HTML artifact it generates — is genuinely a step above what other models offer for nutrition analysis tasks.

Community Verdict: What Reddit and AI Forums Really Think

The community reaction to Muse Spark has been deeply polarized. Here's a balanced summary based on multiple forum threads:

What People Are Praising

- Efficiency is real: Multiple users confirmed that Muse Spark gives comparable-quality answers using visibly fewer tokens. One commenter on r/LocalLLaMA noted: "The responses feel tighter, like there's no fluff padding the output." That "thought compression" technique genuinely delivers noticeable results.

- Visual reasoning is best-in-class: The consensus is that for anything involving interpreting images (charts, photos, diagrams), Muse Spark outperforms everything else available right now. Several users in r/MuseSpark shared side-by-side comparisons that consistently favored Muse Spark for scientific figure analysis.

- Free access matters: With over 3 billion Meta users, the fact that Muse Spark is available at no cost through Meta AI products is significant. As one user put it, "for the average person who'll never pay $20/month for ChatGPT, this is genuinely the most capable AI they've ever had access to."

What People Are Criticizing

- Language mixing issues: Some early users report that Muse Spark occasionally mixes languages in responses, particularly when handling multilingual contexts. This is a known issue that Meta will presumably address in updates.

- Coding performance gaps: Developers are the loudest critics. If your primary use case is code generation, debugging, or agentic coding workflows, the current consensus is to stick with Gemini 3.1 Pro or GPT-5.4.

- Closed-source frustration: We covered this above, but it bears repeating: the AI developer community feels genuinely let down by this decision. The "wait and see" stance on future open-sourcing isn't satisfying the crowd.

- Benchmark skepticism: There's healthy skepticism about some of Meta's internal benchmark claims. Independent tests from Artificial Analysis confirm the HealthBench and visual reasoning strengths, but the overall intelligence index placement (52 vs. 57 for the leaders) suggests the model isn't quite at parity across the board.

Muse Spark vs GPT-5.4 vs Gemini 3.1 Pro vs Claude Opus 4.6: Which Should You Use?

Here's the honest, practical recommendation based on our testing and community consensus:

| Use Case | Best Model | Why |
|---|---|---|
| Health & Medical Queries | Muse Spark | HealthBench Hard leader, trained with 1,000+ physicians |
| Visual/Chart Analysis | Muse Spark | CharXiv 86.4, best visual reasoning available |
| Coding & Development | Gemini 3.1 Pro or GPT-5.4 | Both significantly outperform Muse Spark here |
| Professional Workflows | GPT-5.4 | Computer-use capabilities, 1M+ token context |
| Abstract Scientific Reasoning | Gemini 3.1 Pro | Leading on ARC-AGI-2 and abstract logic benchmarks |
| Creative Writing | Claude Opus 4.6 | Still the stylistic champion |
| Budget-Conscious / Free Access | Muse Spark | Free via Meta AI products, no subscription required |
| Complex Multi-Step Reasoning | Muse Spark (Contemplating) | 58% on Humanity's Last Exam; competitive with paid "Deep Think" modes |

Advantages and Disadvantages at a Glance

✅ Advantages

- Free for 3+ billion users — the widest reach of any frontier model

- Medical and visual reasoning leader — genuinely best-in-class, independently verified

- Token efficient — faster responses through thought compression

- Multi-agent architecture — Contemplating mode provides deep reasoning without premium pricing

- Interactive visual outputs — generates annotated images and HTML artifacts natively

❌ Disadvantages

- Proprietary / closed-source — a jarring departure from Meta's open-weight legacy

- Coding and agentic tasks — lags behind GPT-5.4 and Gemini 3.1 Pro

- No public API yet — developers can't build with it at scale

- Language mixing bugs — occasional multilingual inconsistencies

- Contemplating mode — still in phased rollout, not universally available

What's Coming Next for Muse Spark

Muse Spark is explicitly the first model in the Muse family. Meta has signaled that larger, more capable models are in development. Key things to watch:

- Public API launch: Expected later in 2026 — this will be the real test of whether developers adopt it

- Open-source future: Meta says they "hope" to release future Muse versions as open weight. The community will believe it when they see it

- WhatsApp and Instagram integration: Coming soon, which would embed frontier AI directly into messaging workflows for billions

- Meta smart glasses integration: Ray-Ban Meta integration is reportedly in development, enabling real-time visual AI on the go

Final Verdict

Muse Spark is a genuinely impressive model that excels in specific, well-defined areas — particularly medical reasoning, visual analysis, and token efficiency. For the average user accessing AI through Meta's ecosystem, it's a massive upgrade. For developers and power users, however, the lack of an API, closed-source nature, and coding performance gaps mean GPT-5.4 and Gemini 3.1 Pro remain the more practical choices for most professional workflows.

The most interesting thing about Muse Spark isn't what it does today; it's what it signals about Meta's trajectory. The Muse family is clearly where Meta's AI investment is going, and if Meta follows through on its stated hope of open-sourcing future versions, this could become the most consequential AI model family of 2026.

Have you tried Meta Muse Spark yet? Share your experience in the comments. We're especially curious to hear from anyone who's used the Contemplating mode for complex reasoning tasks.

Last updated: April 9, 2026 — All benchmark data sourced from Artificial Analysis Intelligence Index v4.0 and independently verified community testing.